Visual Studio Code and AWS

Using VSCode to improve your AWS coding experience

Posted by Steven Tan on 5th April 2022

Introduction

Development is a lengthy exercise: referencing StackOverflow, half-remembering concepts, writing generic code and even configuring your coding workspace. All of these add up to hours of unproductive time. I have personally spent countless hours figuring out the exact combination of function calls required to perform a task as simple as "list the security groups for an EC2 instance", time that I would rather have back.

In this post, I outline the tools I use in combination with Visual Studio Code to improve my productivity and sanity. The code examples are in Python as it is my language of choice, but the content is just as relevant for Node.js and any other language.

What is Visual Studio Code? Visual Studio Code is a free and open source code editor with a powerful plugin ecosystem that caters for most modern programming languages.

It is also one of the few Microsoft products I will go out of my way to recommend...

A reference to how it feels when Microsoft asks you how likely you are to recommend Windows 10 to your friends / colleagues

Anyways, let's go straight into the tooling and plugins I recommend for use with Visual Studio Code.

AWS Toolkit

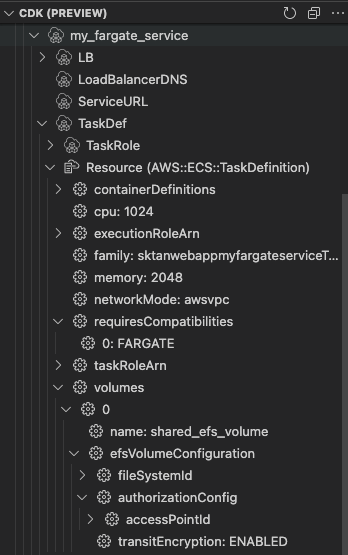

The AWS Toolkit is an open source plugin that simplifies writing, debugging and deploying code on AWS. The biggest reason I use this plugin is for the Cloud Development Kit (CDK) integration when building my CloudFormation Stacks. What is CDK? Taken directly from AWS, "CDK is an open-source software development framework to define your cloud application resources using familiar programming languages".

Despite CDK being an abstraction layer developed by AWS, it occasionally generates resources that do not work on CloudFormation. Sometimes these are edge cases, but other times they are features that you would expect CDK to support natively, or at least not get outright wrong. An example of this can be found in this unresolved issue of CDK using incorrect EFS casing for ECS volume configurations. There are many instances where CDK generates incorrect output that needs to be resolved via escape hatches or raw property overrides.

An example of this that I recently had to implement:

    from aws_cdk import aws_ecs as ecs

    # ecs_service, shared_efs and shared_efs_ap are defined elsewhere in the stack

    # Attach an EFS volume to my Fargate containers
    ecs_service.task_definition.add_volume(
        name="shared_efs_volume",
        efs_volume_configuration=ecs.EfsVolumeConfiguration(
            file_system_id=shared_efs.file_system_id,
            transit_encryption="ENABLED",
            authorization_config=ecs.AuthorizationConfig(
                access_point_id=shared_efs_ap.access_point_id
            ),
        ),
    )

    ecs_service.task_definition.default_container.add_mount_points(
        ecs.MountPoint(
            read_only=False,
            container_path="/var/shared",
            source_volume="shared_efs_volume",
        )
    )

    # TODO: Fix this hack since CDK currently outputs "EfsVolumeConfiguration" instead of "EFSVolumeConfiguration" causing Cfn Issues
    # Currently being tracked in: https://github.com/aws/aws-cdk/issues/15025
    # This also needs to match whatever we put into "ecs_service.task_definition.add_volume"
    task = ecs_service.task_definition.node.default_child
    task.add_property_override(
        "Volumes.0.EFSVolumeConfiguration",
        {
            "FilesystemId": shared_efs.file_system_id,
            "TransitEncryption": "ENABLED",
            "AuthorizationConfig": {
                "AccessPointId": shared_efs_ap.access_point_id
            },
        },
    )
    task.add_property_deletion_override("Volumes.0.EfsVolumeConfiguration")

Without the plugin you will need to:

- Synthesise the stack

- Open your JSON template file

- Reverse engineer how the stack was built

- Figure out the escape hatches and property overrides yourself
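To give a feel for what the manual route involves, here is a minimal sketch that walks a parsed template and prints the path to a given property. The function names, the `TaskDef` logical ID and the template fragment are all made up for illustration; a real synthesised template would be read from the JSON file that `cdk synth` writes out.

```python
import json
from typing import Any, Iterator


def find_property_paths(node: Any, key: str, path: str = "") -> Iterator[str]:
    """Yield the dotted path to every occurrence of `key` in a parsed template."""
    if isinstance(node, dict):
        for name, value in node.items():
            child = f"{path}.{name}" if path else name
            if name == key:
                yield child
            yield from find_property_paths(value, key, child)
    elif isinstance(node, list):
        for index, value in enumerate(node):
            child = f"{path}.{index}" if path else str(index)
            yield from find_property_paths(value, key, child)


def override_path(template_path: str) -> str:
    """Strip everything up to 'Properties.' to get a path relative to the resource."""
    return template_path.split(".Properties.", 1)[1]


# Hypothetical fragment standing in for a synthesised template
template = json.loads("""
{
  "Resources": {
    "TaskDef": {
      "Type": "AWS::ECS::TaskDefinition",
      "Properties": {
        "Volumes": [
          {
            "Name": "shared_efs_volume",
            "EfsVolumeConfiguration": {"FilesystemId": "fs-12345678"}
          }
        ]
      }
    }
  }
}
""")

paths = list(find_property_paths(template, "EfsVolumeConfiguration"))
for found in paths:
    print(found, "->", override_path(found))
```

The second value printed is the kind of string that gets passed to `add_property_override` or `add_property_deletion_override` in the snippet above.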

With the AWS Toolkit, you can use the CDK Preview to interact with the synthesised stack and figure out how the template is being built out:

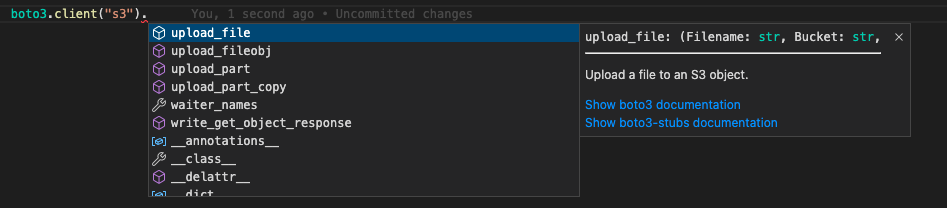

Boto3 Stubs

The biggest issue you'll likely come across when developing with boto3 is that when you call boto3.client or boto3.resource, your editor gives you no further clues on how to interact with the AWS APIs. boto3-stubs addresses this by providing generated type annotations for boto3, which Visual Studio Code uses for IntelliSense and type hinting. This saves an enormous amount of time as you no longer need to open a bunch of boto3 documentation tabs just to figure out what methods are available and how to use them. Installing it is as simple as following the boto3-stubs documentation and installing the stubs relevant to you via pip:

    # Install boto3 type annotations for ec2, s3, rds, lambda, sqs, dynamo and cloudformation
    pip install "boto3-stubs[essential]"

    # Install annotations only for services you use
    pip install "boto3-stubs[iam,sts]"

    # Install annotations for all AWS services
    pip install "boto3-stubs[all]"
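As a rough illustration of what the stubs buy you, here is a sketch of the "list the security groups for an EC2 instance" task from the introduction. The function name is my own, and the `mypy_boto3_ec2` import assumes the EC2 stubs are installed (they are part of the `essential` set). It sits behind `TYPE_CHECKING`, so the code still runs without the stubs.

```python
from typing import TYPE_CHECKING, List

if TYPE_CHECKING:
    # Only needed by the editor / type checker, not at runtime
    from mypy_boto3_ec2 import EC2Client


def security_group_ids(ec2: "EC2Client", instance_id: str) -> List[str]:
    """Return the IDs of the security groups attached to one EC2 instance."""
    response = ec2.describe_instances(InstanceIds=[instance_id])
    return [
        group["GroupId"]
        for reservation in response["Reservations"]
        for instance in reservation["Instances"]
        for group in instance.get("SecurityGroups", [])
    ]


# Example usage (requires boto3 and AWS credentials):
#   import boto3
#   print(security_group_ids(boto3.client("ec2"), "i-0123456789abcdef0"))
```

With the annotation in place, the editor can autocomplete `describe_instances`, its keyword arguments and the keys of the response, which is exactly the part I used to look up in the documentation.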

Dev Containers

The Visual Studio Code Remote - Containers extension allows you to define a container and use it as your workspace rather than the local system. This simplifies dependency management and collaboration amongst maintainers, as everyone is now working in the same development environment with any requirements pre-installed. A well-known Docker meme is "it works on my machine, then we'll ship your machine", and now you really can ship your machine to other developers.

Maintainers are able to add a Dockerfile and some JSON configuration (plus any helper scripts) to the .devcontainer directory within their Git repository. When the codebase is opened, Visual Studio Code will automatically detect this and ask if you would like to reopen the folder in a container.

I've included a standard Python Dev Container definition I use in the two sections below:

Dev Container Configuration

The .devcontainer/devcontainer.json file defines your Visual Studio Code containerised workspace configuration:

- The devcontainer that will be built

- Which extensions to install as part of the workspace

- What directories on the local filesystem to mount

- Any environment variables to include

- Any Visual Studio Code features to install as part of the container (e.g. nodejs, Docker in Docker, AWS CLI v2)

    {
        "build": {
            "dockerfile": "Dockerfile"
        },
        // Install some VSCode Extensions to help with git history, Python, AWS CDK and Github / Jira.
        "extensions": [
            "eamodio.gitlens",
            "ms-python.python",
            "ms-python.vscode-pylance",
            "amazonwebservices.aws-toolkit-vscode",
            "GitHub.vscode-pull-request-github",
            "atlassian.atlascode"
        ],
        // Mount the ~/.aws directory to our container so we can use our AWS Credentials
        "mounts": [
            "source=${localEnv:HOME}${localEnv:USERPROFILE}/.aws/,target=/root/.aws,type=bind,consistency=cached"
        ],
        // Container ENV variables for project specifics (defaults to my sandbox AWS account)
        "remoteEnv": {
            "AWS_DEFAULT_REGION": "ap-southeast-2",
            "AWS_PROFILE": "sktansandbox"
        },
        "features": {
            // Uncomment if you are deploying ECS containers or bundling Lambda via CDK
            // "docker-in-docker": {
            //     "version": "latest",
            //     "moby": true
            // },
            "aws-cli": "latest",
            "node": {
                "version": "lts",
                "nodeGypDependencies": true
            }
        }
    }
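Once the container is up, a quick sanity check can confirm that the environment variables and the credentials mount actually made it through. The following is only a sketch: the function name is my own, and it assumes the user inside the container has the mounted .aws directory in its home directory (with the configuration above that means running as root).

```python
import os
from pathlib import Path
from typing import List, Mapping


def missing_aws_settings(env: Mapping[str, str], home: Path) -> List[str]:
    """Return a list of human-readable problems, empty if everything looks fine."""
    problems = []
    # These are the two variables set under "remoteEnv"
    for name in ("AWS_DEFAULT_REGION", "AWS_PROFILE"):
        if not env.get(name):
            problems.append(f"environment variable {name} is not set")
    # The "mounts" entry should make the credentials directory appear here
    aws_dir = home / ".aws"
    if not aws_dir.is_dir():
        problems.append(f"{aws_dir} does not exist, is the mount configured?")
    return problems


if __name__ == "__main__":
    for problem in missing_aws_settings(os.environ, Path.home()):
        print(problem)
```

If the script prints nothing, both settings and the credentials directory are visible from inside the container.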

Dockerfile

The .devcontainer/Dockerfile file builds out our Docker environment and allows you to install packages as you would with a standard Dockerfile. I usually include a bunch of utility applications in here that I need or want (e.g. ripgrep for quick text searching, gnupg2 for signing my commits, etc).

Visit the microsoft-vscode-devcontainers Docker Hub page if you want a reference to all the other Microsoft maintained images you can use.

    FROM mcr.microsoft.com/vscode/devcontainers/python:3.9

    ENV EDITOR=vim

    RUN apt-get update && export DEBIAN_FRONTEND=noninteractive && \
        apt-get install -y vim gnupg2 ripgrep && \
        pip install pre-commit

GitHub Copilot

What is GitHub Copilot? GitHub Copilot is an AI tool that helps you write code faster and with less work. It takes contextual information from your comments and code, and uses it to generate code suggestions that you can accept on the spot. This might sound like cheating, but it speeds up the programming process, as simple things can often be generated accurately by Copilot.

For example, let's say that we want to get a list of all IAM users with access keys older than 90 days via a Python script. Maybe you need this information to notify individual users that it's time to rotate their keys before they get deactivated? With Copilot, you can simplify this process by providing it with some context (in my case, a method name and a simple docstring), and it was able to provide me with code that I could use for this purpose.
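For reference, a hand-written sketch of that kind of function might look like the following. This is not Copilot's actual output. The function name and signature are my own choices, and `iam` is assumed to be a boto3 IAM client, e.g. `boto3.client("iam")`.

```python
from datetime import datetime, timedelta, timezone
from typing import Any, Dict, List, Optional


def users_with_old_access_keys(
    iam: Any, max_age_days: int = 90, now: Optional[datetime] = None
) -> Dict[str, List[str]]:
    """Return {user name: [access key IDs]} for access keys older than max_age_days."""
    cutoff = (now or datetime.now(timezone.utc)) - timedelta(days=max_age_days)
    stale: Dict[str, List[str]] = {}
    # list_users is paginated, so walk every page
    for page in iam.get_paginator("list_users").paginate():
        for user in page["Users"]:
            name = user["UserName"]
            keys = iam.list_access_keys(UserName=name)["AccessKeyMetadata"]
            # CreateDate is a timezone-aware datetime in boto3 responses
            old = [key["AccessKeyId"] for key in keys if key["CreateDate"] < cutoff]
            if old:
                stale[name] = old
    return stale


# Example usage (requires boto3 and AWS credentials):
#   import boto3
#   print(users_with_old_access_keys(boto3.client("iam")))
```

Whether the code comes from Copilot or from your own keyboard, it is worth checking it against the boto3 documentation (or the type hints from boto3-stubs) before pointing it at a real account.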

Installing it is very simple: search for GitHub.copilot in the Visual Studio Code extensions browser, or install it directly from the Visual Studio Code website.

Disclaimer: It's only a matter of time before the AI attempts to take over your job and then the world, so please review the code it suggests before running it.